Official documentation: https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/

1. Environment

Use the kubeadm deployment tool to quickly install Kubernetes v1.22.2.

Environment details:

| Hostname | OS | External IP | Internal IP |
|---|---|---|---|
| master | CentOS 7.6.1810 | 10.0.0.100 | 192.168.100.100 |
| node1 | CentOS 7.6.1810 | 10.0.0.101 | 192.168.100.101 |
| node2 | CentOS 7.6.1810 | 10.0.0.102 | 192.168.100.102 |

Note: no two nodes may share a hostname, MAC address, or product_uuid.
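The sketch below collects those values on each host. It is not part of the original steps. It reads /sys directly, so it needs no extra packages. The function name print_node_identity is my own choice. So is its optional root-directory argument, which exists only so the function can be pointed at a test fixture. The kubeadm documentation itself suggests ip link and cat /sys/class/dmi/id/product_uuid.

```bash
#!/usr/bin/env bash
# Sketch: print the values that must be unique per node so the output of
# master, node1 and node2 can be compared. The optional argument replaces
# /sys (useful for testing); product_uuid is normally readable by root only.
print_node_identity() {
  local sys="${1:-/sys}" addr_file
  echo "hostname: $(uname -n)"
  # One "address" file per network interface holds its MAC address.
  for addr_file in "$sys"/class/net/*/address; do
    [ -r "$addr_file" ] || continue
    echo "mac $(basename "$(dirname "$addr_file")"): $(cat "$addr_file")"
  done
  echo "product_uuid: $(cat "$sys/class/dmi/id/product_uuid" 2>/dev/null || echo unavailable)"
}
```

Run print_node_identity as root on all three hosts and compare the outputs line by line.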

2. Basic preparation

2.1 Basic setup

Disable the firewall and SELinux:

systemctl stop firewalld && systemctl disable firewalld

sed -i '/^SELINUX=/c SELINUX=disabled' /etc/selinux/config

setenforce 0

Disable swap:

swapoff -a

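# Keep swap off after a reboot by commenting out its /etc/fstab entry (centos-swap is the default CentOS LVM volume name)

sed -i 's/^.*centos-swap/#&/g' /etc/fstab

Add the host mappings: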

cat << EOF >> /etc/hosts

10.0.0.100 master

10.0.0.101 node1

10.0.0.102 node2

EOF
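Steps like these are easy to leave half-applied, so here is a small verification sketch. It is my addition, not from the original guide. The function names are hypothetical, and each function takes an optional file path so it can be tried against test files. The defaults are the real kernel interfaces and /etc/hosts. ensure_host_entry is an idempotent alternative to the cat >> /etc/hosts step above, which appends duplicate lines if run twice.

```bash
#!/usr/bin/env bash
# Print the number of active swap areas (0 means swap is fully off).
# /proc/swaps has one header line followed by one line per active area.
active_swap_count() {
  local swaps_file="${1:-/proc/swaps}"
  awk 'NR > 1 && NF > 0 { n++ } END { print n + 0 }' "$swaps_file"
}

# Print the SELinux mode. The enforce file holds 1 (enforcing) or
# 0 (permissive) and is absent when SELinux is disabled.
selinux_mode() {
  local enforce_file="${1:-/sys/fs/selinux/enforce}"
  if [ ! -r "$enforce_file" ]; then
    echo disabled
  elif [ "$(cat "$enforce_file")" = 1 ]; then
    echo enforcing
  else
    echo permissive
  fi
}

# Append "ip name" only if no non-comment line already maps that name.
ensure_host_entry() {
  local hosts_file="$1" ip="$2" name="$3"
  if ! awk -v n="$name" '$1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
                         END { exit !found }' "$hosts_file"; then
    echo "$ip $name" >> "$hosts_file"
  fi
}
```

On each node, active_swap_count should print 0. selinux_mode should print permissive right after setenforce 0, or disabled after a reboot. The host entries can be added with ensure_host_entry /etc/hosts 10.0.0.100 master, and likewise for node1 and node2.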

2.2 Kernel parameters and modules

# Load the br_netfilter module now; the modules-load.d file below also loads it at boot

modprobe br_netfilter

cat << EOF > /etc/modules-load.d/k8s.conf

br_netfilter

EOF

# Kernel parameters: enable IP forwarding and let iptables process bridged traffic

cat << EOF > /etc/sysctl.d/k8s.conf

net.ipv4.ip_forward = 1

net.bridge.bridge-nf-call-iptables = 1

net.bridge.bridge-nf-call-ip6tables = 1

EOF

# Apply immediately

sysctl --system
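To confirm the three settings are live, the sketch below reads them straight from /proc/sys. That is the same data sysctl net.ipv4.ip_forward and friends report. The function name and its optional root argument are my own. The argument exists only so the check can run against a fake directory tree. The bridge files only appear once br_netfilter is loaded.

```bash
#!/usr/bin/env bash
# Sketch: check the values set in /etc/sysctl.d/k8s.conf. Returns non-zero
# if any key is missing or not 1. $1 optionally replaces /proc/sys.
check_k8s_sysctls() {
  local root="${1:-/proc/sys}" rc=0 key file value
  for key in net.ipv4.ip_forward \
             net.bridge.bridge-nf-call-iptables \
             net.bridge.bridge-nf-call-ip6tables; do
    # net.ipv4.ip_forward -> <root>/net/ipv4/ip_forward
    file="$root/$(echo "$key" | tr . /)"
    if [ ! -r "$file" ]; then
      echo "$key: missing (is br_netfilter loaded?)"
      rc=1
      continue
    fi
    value="$(cat "$file")"
    if [ "$value" = 1 ]; then
      echo "$key = 1 ok"
    else
      echo "$key = $value, expected 1"
      rc=1
    fi
  done
  return "$rc"
}
```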

2.3 Cluster time synchronization

On the master node:

yum install -y chrony

sed -i 's/^server/#&/' /etc/chrony.conf

cat >> /etc/chrony.conf << EOF

#server ntp1.aliyun.com iburst

local stratum 10

allow 10.0.0.0/24

EOF

systemctl restart chronyd

systemctl enable chronyd

On the worker nodes:

yum install -y chrony

sed -i 's/^server/#&/' /etc/chrony.conf

cat >> /etc/chrony.conf << EOF

server 10.0.0.100 iburst

EOF

systemctl restart chronyd

systemctl enable chronyd
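Each time the node-side commands above run, they comment out the previous server line and append a new one. The sketch below is an idempotent variant. The function name is hypothetical, and the default path is the real /etc/chrony.conf.

```bash
#!/usr/bin/env bash
# Sketch: point chrony at a single upstream server, safely re-runnable.
# $1 = server address, $2 = config file (defaults to /etc/chrony.conf).
configure_chrony_client() {
  local server="$1" conf="${2:-/etc/chrony.conf}"
  # Already configured for this server: nothing to do.
  if grep -q "^server ${server} " "$conf"; then
    return 0
  fi
  # Comment out every active "server" line, then add the master.
  sed -i 's/^server/#&/' "$conf"
  echo "server ${server} iburst" >> "$conf"
}
```

Run configure_chrony_client 10.0.0.100, then systemctl restart chronyd. After that, chronyc sources -v should list 10.0.0.100, marked ^* once chrony has selected it.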

3. Install Docker

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo

yum install -y docker-ce

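systemctl enable docker && systemctl start docker

Next, configure Docker's registry mirror, cgroup driver, and storage driver. Get your own registry mirror (accelerator) address from the Alibaba Cloud Container Registry console.

Kubernetes recommends the systemd cgroup driver over cgroupfs. Docker defaults to cgroupfs, while kubeadm (from v1.22) configures the kubelet to use systemd. For a stable system, the container runtime and the kubelet should therefore both use systemd as the cgroup driver.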

cat << EOF > /etc/docker/daemon.json

{

"registry-mirrors": ["https://xxxxx.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"storage-driver": "overlay2"

}

EOF

systemctl daemon-reload

systemctl restart docker
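Once kubeadm has written the kubelet configuration (after kubeadm init on the master or kubeadm join on a node), the sketch below checks that both sides agree. docker info --format '{{.CgroupDriver}}' and /var/lib/kubelet/config.yaml are the real sources. The function name and the DOCKER and KUBELET_CONFIG overrides are my additions, there for testing.

```bash
#!/usr/bin/env bash
# Sketch: succeed only if Docker and the kubelet use the same cgroup driver.
cgroup_drivers_match() {
  local docker_bin="${DOCKER:-docker}"
  local kubelet_config="${KUBELET_CONFIG:-/var/lib/kubelet/config.yaml}"
  local docker_driver kubelet_driver
  docker_driver="$("$docker_bin" info --format '{{.CgroupDriver}}')"
  # kubeadm writes a top-level "cgroupDriver: <name>" line into this file.
  kubelet_driver="$(awk '$1 == "cgroupDriver:" { print $2 }' "$kubelet_config")"
  echo "docker: ${docker_driver:-unknown}, kubelet: ${kubelet_driver:-unknown}"
  [ -n "$docker_driver" ] && [ "$docker_driver" = "$kubelet_driver" ]
}
```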

4. Install kubeadm and related tools

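4.1 Configure the yum repository

The official repository is hosted overseas and may be unreachable or very slow, so use a domestic mirror such as Alibaba Cloud or Tsinghua University.

Here we use the Alibaba Cloud mirror: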

cat << EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

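4.2 Install kubeadm

Note: with the Alibaba Cloud mirror, the GPG check on the repository index may fail, because upstream does not offer an official sync method. In that case, install with yum install -y --nogpgcheck kubelet kubeadm kubectl.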

yum install -y kubelet kubeadm kubectl

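systemctl enable kubelet && systemctl start kubelet

Until kubeadm init (or kubeadm join) gives the kubelet its configuration, the kubelet restarts every few seconds; this is expected.

Enable bash completion for kubeadm and kubectl: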

kubeadm completion bash > /etc/bash_completion.d/kubeadm

kubectl completion bash >/etc/bash_completion.d/kubectl

source /etc/bash_completion.d/kubeadm

source /etc/bash_completion.d/kubectl
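The install command above takes whatever is newest in the mirror. That may no longer be v1.22.2, and then it would not match the --kubernetes-version=v1.22.2 used later. The sketch below pins the versions. The helper name is hypothetical; yum accepts name-version package specs.

```bash
#!/usr/bin/env bash
# Sketch: print version-pinned package names for kubelet, kubeadm, kubectl.
k8s_packages() {
  local version="$1"
  [ -n "$version" ] || return 1
  echo "kubelet-${version} kubeadm-${version} kubectl-${version}"
}
```

yum list kubeadm --showduplicates shows the versions the mirror offers. Then install with yum install -y $(k8s_packages 1.22.2).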

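5. Download the Kubernetes images

kubeadm pulls the Kubernetes images from k8s.gcr.io by default. That registry is hosted overseas and is often unreachable or slow, so image pulls can fail and break the installation.

So we prepare the images in advance. We can import images downloaded beforehand, use another domestic mirror, or pull from a self-built registry.

Here I use a self-built Alibaba Cloud Container Registry repository. It can build images in overseas data centers and then push the results to a region of your choice. Build your own there, or use mine.

[root@master ~]# kubeadm config images list # list the images kubeadm needs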

k8s.gcr.io/kube-apiserver:v1.22.2

k8s.gcr.io/kube-controller-manager:v1.22.2

k8s.gcr.io/kube-scheduler:v1.22.2

k8s.gcr.io/kube-proxy:v1.22.2

k8s.gcr.io/pause:3.5

k8s.gcr.io/etcd:3.5.0-0

k8s.gcr.io/coredns/coredns:v1.8.4

[root@master ~]# kubeadm config images list --image-repository=registry.cn-qingdao.aliyuncs.com/k8s-images1

registry.cn-qingdao.aliyuncs.com/k8s-images1/kube-apiserver:v1.22.2

registry.cn-qingdao.aliyuncs.com/k8s-images1/kube-controller-manager:v1.22.2

registry.cn-qingdao.aliyuncs.com/k8s-images1/kube-scheduler:v1.22.2

registry.cn-qingdao.aliyuncs.com/k8s-images1/kube-proxy:v1.22.2

registry.cn-qingdao.aliyuncs.com/k8s-images1/pause:3.5

registry.cn-qingdao.aliyuncs.com/k8s-images1/etcd:3.5.0-0

registry.cn-qingdao.aliyuncs.com/k8s-images1/coredns:v1.8.4

You can of course use this public mirror directly instead:

[root@master ~]# kubeadm config images list --kubernetes-version v1.22.2 --image-repository=registry.aliyuncs.com/google_containers

registry.aliyuncs.com/google_containers/kube-apiserver:v1.22.2

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.22.2

registry.aliyuncs.com/google_containers/kube-scheduler:v1.22.2

registry.aliyuncs.com/google_containers/kube-proxy:v1.22.2

registry.aliyuncs.com/google_containers/pause:3.5

registry.aliyuncs.com/google_containers/etcd:3.5.0-0

registry.aliyuncs.com/google_containers/coredns:v1.8.4
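Passing --image-repository to kubeadm, as in the next section, is enough on its own. You may instead want to keep kubeadm's default k8s.gcr.io names, for example because other tooling expects them. In that case, the sketch below pulls each image from the mirror and retags it. MIRROR and DRY_RUN are variables I introduced. The mirror flattens paths, so coredns/coredns becomes plain coredns.

```bash
#!/usr/bin/env bash
# Run a command, or just print it when DRY_RUN=1.
run() {
  if [ "${DRY_RUN:-0}" = 1 ]; then
    echo "$*"
  else
    "$@"
  fi
}

# Read image names (one per line, as printed by "kubeadm config images list")
# from stdin, pull each from the mirror, and tag it with the original name.
mirror_and_retag() {
  local mirror="${MIRROR:-registry.cn-qingdao.aliyuncs.com/k8s-images1}"
  local image src
  while read -r image || [ -n "$image" ]; do
    [ -n "$image" ] || continue
    # k8s.gcr.io/coredns/coredns:v1.8.4 -> <mirror>/coredns:v1.8.4
    src="${mirror}/${image##*/}"
    run docker pull "$src"
    run docker tag "$src" "$image"
  done
}
```

Usage: kubeadm config images list --kubernetes-version v1.22.2 | mirror_and_retag. To only pre-pull under the mirror's names, use kubeadm config images pull --image-repository=registry.cn-qingdao.aliyuncs.com/k8s-images1 --kubernetes-version=v1.22.2 instead.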

6. Install the master node

Initialize the Kubernetes master node:

[root@master ~]# kubeadm init --image-repository=registry.cn-qingdao.aliyuncs.com/k8s-images1 --kubernetes-version=v1.22.2 --service-cidr=10.1.0.0/16 --pod-network-cidr=10.244.0.0/16

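Options:

--image-repository: the registry to pull control-plane images from (default "k8s.gcr.io")

--kubernetes-version: the Kubernetes version to install (default "stable-1")

--service-cidr: the IP range for Service virtual IPs (default "10.96.0.0/12")

--pod-network-cidr: the IP range for the Pod network. If set, the control plane automatically allocates a CIDR to each node.

Note:

We deploy flannel later, and flannel's default manifest uses 10.244.0.0/16, so we set the Pod network range to 10.244.0.0/16.

The output shows the success message and a few hints: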

......

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

Alternatively, if you are the root user, you can run:

export KUBECONFIG=/etc/kubernetes/admin.conf

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 10.0.0.100:6443 --token xeqz0h.w0vp4e1vjnt2zs1v \

--discovery-token-ca-cert-hash sha256:77363daea05baa04513f4317d69d783c785f6c58a8212c3238cad52040ca5dbe

What the hints above mean:

# To start using the cluster, run the following as a regular user

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

# Alternatively, if you are the root user, run the following command

export KUBECONFIG=/etc/kubernetes/admin.conf

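We do both here.

The third hint is about the Pod network.

The last hint is about joining worker nodes: run the printed command on each node. Record it now, because we will need it shortly.

Note: if the installation errors out or fails, run kubeadm reset to restore the host, then run kubeadm init again.

At this point the Kubernetes master node is installed. However, the cluster has no worker nodes yet and no container network configured.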
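The kubeadm init flags used above can also live in a configuration file, which is easier to review and reuse. This sketch assumes the kubeadm.k8s.io/v1beta3 format introduced in v1.22. The function name is hypothetical. The extra KubeletConfiguration document sets the systemd cgroup driver explicitly, although kubeadm v1.22 already defaults to it.

```bash
#!/usr/bin/env bash
# Sketch: write the init settings of this guide to a kubeadm config file.
write_kubeadm_config() {
  local out="${1:-kubeadm-config.yaml}"
  cat > "$out" << 'EOF'
apiVersion: kubeadm.k8s.io/v1beta3
kind: ClusterConfiguration
kubernetesVersion: v1.22.2
imageRepository: registry.cn-qingdao.aliyuncs.com/k8s-images1
networking:
  serviceSubnet: 10.1.0.0/16
  podSubnet: 10.244.0.0/16
---
apiVersion: kubelet.config.k8s.io/v1beta1
kind: KubeletConfiguration
cgroupDriver: systemd
EOF
}
```

Usage: write_kubeadm_config && kubeadm init --config kubeadm-config.yaml. Do not combine --config with the individual flags above. kubeadm config print init-defaults prints every available field.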

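7. Install the network plugin

Kubernetes relies on a third-party network plugin to provide Pod networking, so installing one is a prerequisite. There are several to choose from; see https://kubernetes.io/docs/concepts/cluster-administration/addons/

Here we use flannel (https://github.com/flannel-io/flannel):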

# Install the network plugin

[root@master ~]# kubectl apply -f https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

# Check flannel's status

[root@master ~]# kubectl get pods -A | grep flannel

kube-system kube-flannel-ds-kssdv 1/1 Running 0 17s

If you run the commands above as-is, flannel may not come up, because its images pull slowly or fail to pull altogether, for example:

[root@master ~]# kubectl get pods -A | grep flannel

kube-system kube-flannel-ds-jmdhd 0/1 Init:ImagePullBackOff 0 12m

[root@master ~]# kubectl describe pod kube-flannel-ds-jmdhd -n kube-system

......

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 23m default-scheduler Successfully assigned kube-system/kube-flannel-ds-jmdhd to master

Normal Pulling 9m32s (x4 over 23m) kubelet Pulling image "rancher/flannel-cni-plugin:v1.2"

Warning Failed 5m38s (x4 over 19m) kubelet Failed to pull image "rancher/flannel-cni-plugin:v1.2": rpc error: code = Unknown desc = pull access denied for rancher/flannel-cni-plugin, repository does not exist or may require 'docker login': denied: requested access to the resource is denied

Warning Failed 5m38s (x4 over 19m) kubelet Error: ErrImagePull

Normal BackOff 5m5s (x8 over 19m) kubelet Back-off pulling image "rancher/flannel-cni-plugin:v1.2"

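Warning Failed 5m5s (x8 over 19m) kubelet Error: ImagePullBackOff

In that case, check which images the manifest needs and try pulling them manually. If that does not work, build the images in your Alibaba Cloud registry, pull them, tag them with the original names, and apply the manifest again. You can also download the image archives and import them: https://github.com/flannel-io/flannel/releases.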

[root@master ~]# wget https://raw.githubusercontent.com/coreos/flannel/master/Documentation/kube-flannel.yml

[root@master ~]# cat kube-flannel.yml | grep image

image: rancher/flannel-cni-plugin:v1.2

image: quay.io/coreos/flannel:v0.15.0-rc1

image: quay.io/coreos/flannel:v0.15.0-rc1
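flannel runs as a DaemonSet, so every node needs these images. The sketch below lists the unique images in a manifest so they can be pulled, or mirrored and retagged, up front. manifest_images is a hypothetical helper. It only recognises the image: key, quoted or not, either on its own line or as "- image:".

```bash
#!/usr/bin/env bash
# Sketch: print each image referenced in a Kubernetes manifest once.
manifest_images() {
  awk '$1 == "image:"                { gsub(/"/, "", $2); print $2 }
       $1 == "-" && $2 == "image:"   { gsub(/"/, "", $3); print $3 }' "$1" | sort -u
}
```

On each node: for img in $(manifest_images kube-flannel.yml); do docker pull "$img"; done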

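8. Add worker nodes to the cluster

On each worker node, carry out the basic preparation, Docker installation, and kubeadm installation steps above. Then run the join command printed at the end of kubeadm init on the master:

[root@node1 ~]# kubeadm join 10.0.0.100:6443 --token xeqz0h.w0vp4e1vjnt2zs1v --discovery-token-ca-cert-hash sha256:77363daea05baa04513f4317d69d783c785f6c58a8212c3238cad52040ca5dbe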
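The join token printed by kubeadm init expires, after 24 hours by default. On the master, kubeadm token create --print-join-command prints a fresh, complete join command. If you only need the CA hash, the kubeadm documentation derives it with the openssl pipeline wrapped below. The function name is mine, and it assumes the default RSA CA key.

```bash
#!/usr/bin/env bash
# Sketch: compute the value for --discovery-token-ca-cert-hash (without the
# "sha256:" prefix) from the cluster CA certificate.
ca_cert_hash() {
  local ca_cert="${1:-/etc/kubernetes/pki/ca.crt}"
  openssl x509 -pubkey -noout -in "$ca_cert" \
    | openssl rsa -pubin -outform der 2>/dev/null \
    | openssl dgst -sha256 -hex \
    | sed 's/^.* //'
}
```

Usage on the master: echo "sha256:$(ca_cert_hash)"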

9. Verify

[root@master ~]# kubectl get nodes # list all nodes; append "-o wide" for more details

NAME STATUS ROLES AGE VERSION

master Ready control-plane,master 23m v1.22.2

node1 NotReady <none> 34s v1.22.2

node2 NotReady <none> 31s v1.22.2
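node1 and node2 report NotReady until the flannel Pod on each of them is running. The sketch below scripts that check. The helper is hypothetical and reads the output of kubectl get nodes --no-headers from stdin. Alternatively, kubectl wait --for=condition=Ready nodes --all --timeout=300s blocks until every node is Ready.

```bash
#!/usr/bin/env bash
# Sketch: count nodes whose STATUS column is anything other than exactly
# "Ready" (so "NotReady" and "Ready,SchedulingDisabled" both count).
count_not_ready() {
  awk '$2 != "Ready" { n++ } END { print n + 0 }'
}
```

Usage: kubectl get nodes --no-headers | count_not_ready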

[root@master ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system coredns-664d5f5d45-dp9rd 1/1 Running 0 37m

kube-system coredns-664d5f5d45-frlrc 1/1 Running 0 37m

kube-system etcd-master 1/1 Running 0 38m

kube-system kube-apiserver-master 1/1 Running 0 38m

kube-system kube-controller-manager-master 1/1 Running 0 38m

kube-system kube-flannel-ds-488r9 1/1 Running 0 36m

kube-system kube-flannel-ds-9jfbk 1/1 Running 0 14m

kube-system kube-flannel-ds-gftxx 1/1 Running 0 14m

kube-system kube-proxy-5cc44 1/1 Running 0 37m

kube-system kube-proxy-q6j4h 1/1 Running 0 14m

kube-system kube-proxy-rsdpq 1/1 Running 0 14m

kube-system kube-scheduler-master 1/1 Running 0 38m

Test the cluster DNS:

[root@master ~]# kubectl run test1 -it --rm --image=busybox:1.28.3

If you don't see a command prompt, try pressing enter.

/ # nslookup kubernetes

Server: 10.1.0.10

Address 1: 10.1.0.10 kube-dns.kube-system.svc.cluster.local

Name: kubernetes

Address 1: 10.1.0.1 kubernetes.default.svc.cluster.local

Deploy a service to verify the cluster:

[root@master ~]# kubectl create deployment nginx --image=nginx

deployment.apps/nginx created

[root@master ~]# kubectl expose deployment nginx --port=80 --type=NodePort

service/nginx exposed

[root@master ~]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.1.0.1 <none> 443/TCP 3m10s

nginx NodePort 10.1.54.127 <none> 80:32756/TCP 10s

[root@master ~]# curl 10.1.54.127:80

<!DOCTYPE html>

<html>

<head>

<title>Welcome to nginx!</title>

......

With that, we have quickly installed and deployed a Kubernetes cluster with kubeadm.

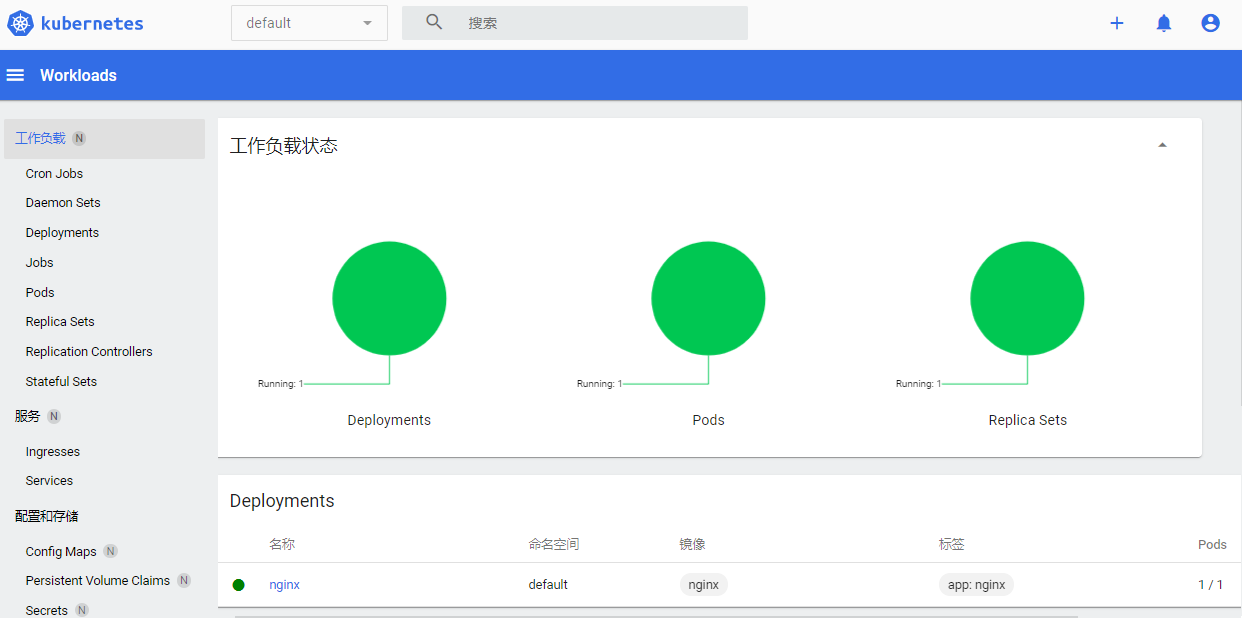
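The curl above targets the ClusterIP, which only answers from inside the cluster. From outside, use any node's IP plus the NodePort (32756 here). The sketch below looks the port up. nodeport_url and the KUBECTL override are my own, added so the function can be tested without a cluster.

```bash
#!/usr/bin/env bash
# Sketch: print http://<node IP>:<nodePort> for a NodePort Service.
nodeport_url() {
  local node_ip="$1" svc="$2" port
  port="$("${KUBECTL:-kubectl}" get svc "$svc" -o jsonpath='{.spec.ports[0].nodePort}')"
  [ -n "$port" ] || return 1
  echo "http://${node_ip}:${port}"
}
```

Usage from another machine: curl -s "$(nodeport_url 10.0.0.101 nginx)"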

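10. Deploy the Dashboard

Dashboard is a web-based Kubernetes user interface. You can use it to deploy containerized applications to a Kubernetes cluster, troubleshoot them, and manage the cluster and its resources. It gives an overview of the applications running in the cluster and lets you create or modify Kubernetes resources such as Deployments, Jobs, and DaemonSets. For example, you can scale a Deployment, start a rolling update, restart a Pod, or deploy a new application with a wizard.

Install the Dashboard (https://github.com/kubernetes/dashboard):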

[root@master ~]# kubectl apply -f https://raw.githubusercontent.com/kubernetes/dashboard/v2.3.1/aio/deploy/recommended.yaml

[root@master ~]# kubectl get pods -n kubernetes-dashboard

NAME READY STATUS RESTARTS AGE

dashboard-metrics-scraper-856586f554-8jm2p 1/1 Running 0 53s

kubernetes-dashboard-67484c44f6-kp7p6 1/1 Running 0 53s

[root@master ~]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 10.1.247.165 <none> 8000/TCP 3m57s

kubernetes-dashboard ClusterIP 10.1.205.169 <none> 443/TCP 3m57s

# Expose the Service outside the cluster

[root@master ~]# kubectl edit svc -n kubernetes-dashboard kubernetes-dashboard

Change type: ClusterIP to type: NodePort and save.
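kubectl edit opens an interactive editor. For scripts, a merge patch makes the same change. The sketch below patches the Service and prints the assigned NodePort. expose_dashboard and the KUBECTL override are hypothetical names of mine.

```bash
#!/usr/bin/env bash
# Sketch: switch the Dashboard Service to NodePort, then print its port.
expose_dashboard() {
  local kubectl="${KUBECTL:-kubectl}"
  "$kubectl" -n kubernetes-dashboard patch svc kubernetes-dashboard \
    --type merge -p '{"spec":{"type":"NodePort"}}' >/dev/null || return 1
  "$kubectl" -n kubernetes-dashboard get svc kubernetes-dashboard \
    -o jsonpath='{.spec.ports[0].nodePort}'
  echo
}
```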

[root@master ~]# kubectl get svc -n kubernetes-dashboard

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

dashboard-metrics-scraper ClusterIP 10.1.247.165 <none> 8000/TCP 11m

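kubernetes-dashboard NodePort 10.1.205.169 <none> 443:32050/TCP 11m

Open it in a browser over HTTPS at a node IP and the NodePort, for example https://10.0.0.100:32050. The Dashboard serves a self-signed certificate, so expect a browser warning.

Create a service account and a cluster role binding, then get the account's token.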

[root@master ~]# vim token.yaml

apiVersion: v1

kind: ServiceAccount

metadata:

  name: admin-user

  namespace: kubernetes-dashboard

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:

  name: admin-user

roleRef:

  apiGroup: rbac.authorization.k8s.io

  kind: ClusterRole

  name: cluster-admin

subjects:

- kind: ServiceAccount

  name: admin-user

  namespace: kubernetes-dashboard

[root@master ~]# kubectl apply -f token.yaml

serviceaccount/admin-user created

clusterrolebinding.rbac.authorization.k8s.io/admin-user created

Get the token:

[root@master ~]# kubectl -n kubernetes-dashboard get secret $(kubectl -n kubernetes-dashboard get sa/admin-user -o jsonpath="{.secrets[0].name}") -o go-template="{{.data.token | base64decode}}"

eyJhbGciOiJSUzI1NiIsImtpZCI6Ijc4UmVuZl94SHdBaVMwSE5VWE84MERveVgtYkNiN2R1MkxPeXppYm5EaGMifQ.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlcm5ldGVzLWRhc2hib2FyZCIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLTJmcXZoIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiI3Y2MxZWU2ZS1iZjZlLTRkYWQtYjg2ZC1iN2RhNGFhOThlZWYiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZXJuZXRlcy1kYXNoYm9hcmQ6YWRtaW4tdXNlciJ9.IolwYalU9RlREIC0nfHjRCkmK-GYtse8JynfzRYsr0b_x6wwhWJG6dn2c_AWlqst9hIDWwp_fXUPNoyOtiHmp2gup48u0RXBgBbXjOxD3AvjaJL__FmTgHiNe4m8nlSZ5_th5m4SvlnIzk3imzusJSEJuDBC5OtFRUPIynriGTH8OJooeQWwxqRzSadUpMmBtAu89azozK3fE6JQ_mVmtpxaOyNFzzAMSsVmwvrlUep4sd1miE0viCaKTXe-hplSMLhjf_NOfeBiEL7MTwIu6CU0U0zUdNaL-g9yNRdKpOG6oCy_xGZvn3e7PrzryiSbYWVkGMiM0cI0CZju7cnyOQ

Paste the token into the login page:

The login succeeds right away.

Nice.
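The Secret-based command above works on v1.22, where each ServiceAccount automatically gets a token Secret. From Kubernetes v1.24 that Secret is no longer created by default, so .secrets[0] comes back empty. On those versions, kubectl create token (kubectl v1.24+) requests a short-lived token instead. The hedged sketch below tries the newer command first and falls back to the Secret. The function name and the KUBECTL override are mine.

```bash
#!/usr/bin/env bash
# Sketch: print a login token for a ServiceAccount in kubernetes-dashboard.
dashboard_token() {
  local kubectl="${KUBECTL:-kubectl}" ns=kubernetes-dashboard sa="${1:-admin-user}" secret
  # kubectl >= 1.24: ask the TokenRequest API for a short-lived token.
  if "$kubectl" -n "$ns" create token "$sa" 2>/dev/null; then
    return 0
  fi
  # Older clusters such as v1.22: decode the auto-created token Secret.
  secret="$("$kubectl" -n "$ns" get sa "$sa" -o jsonpath='{.secrets[0].name}')"
  [ -n "$secret" ] || return 1
  "$kubectl" -n "$ns" get secret "$secret" -o go-template='{{.data.token | base64decode}}'
}
```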